import os

os.environ["XLA_FLAGS"] = "--xla_force_host_platform_device_count=8"

from tvbo import SimulationExperiment

import matplotlib.pyplot as plt

import numpy as np

# Load experiment (network + target FC loaded from BIDS automatically)

exp = SimulationExperiment.from_db("EI_Tuning_FIC_EIB_Optimization")

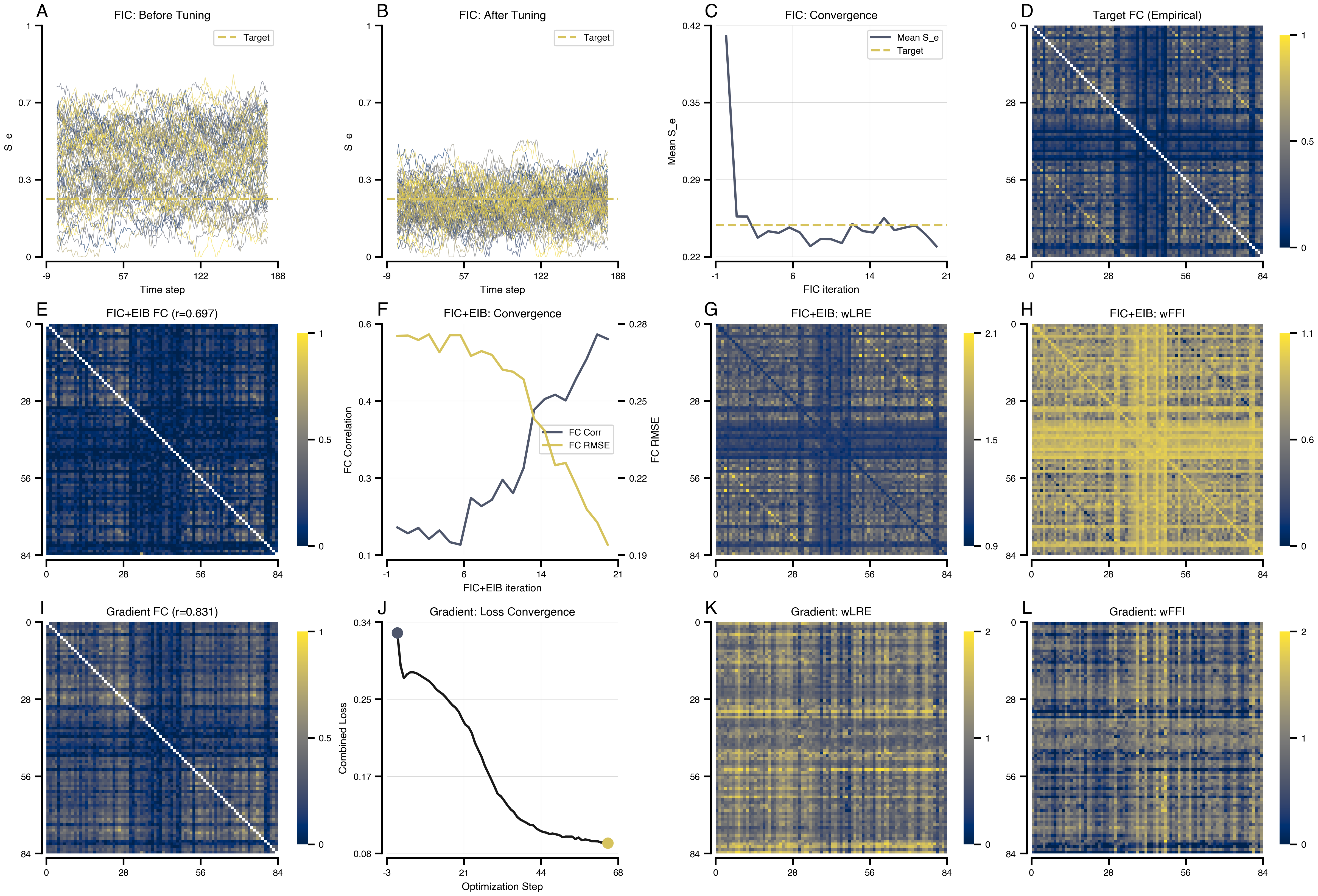

print(exp.render_code('tvboptim'))Excitation-Inhibition Balance Tuning via tvboptim

Reproducing the FIC+EIB tuning workflow from Schirner et al. (2023). Fits local inhibitory weights (J_i) for E-I balance and dual coupling weights (wLRE, wFFI) to match empirical functional connectivity.

results = exp.run("tvboptim")

============================================================

STEP 1: Running simulation...

============================================================

Simulation period: 300000.0 ms, dt: 4.0 ms

Transient period: 300000.0 ms

Simulation complete.

============================================================

STEP 3: Running algorithms...

============================================================

Algorithms to run (dependency order): ['fic', 'fic_eib']

\n>>> Running algorithm: fic (seed=0)

Running fic algorithm for 200 iterations...

10/200: mean_S_e=0.2707, mean_S_i=0.0948, bold=0.8968

20/200: mean_S_e=0.2495, mean_S_i=0.0885, bold=0.8020

30/200: mean_S_e=0.2385, mean_S_i=0.0855, bold=0.7663

40/200: mean_S_e=0.2399, mean_S_i=0.0857, bold=0.7535

50/200: mean_S_e=0.2488, mean_S_i=0.0890, bold=0.8102

60/200: mean_S_e=0.2493, mean_S_i=0.0877, bold=0.8181

70/200: mean_S_e=0.2433, mean_S_i=0.0858, bold=0.8208

80/200: mean_S_e=0.2357, mean_S_i=0.0839, bold=0.7898

90/200: mean_S_e=0.2420, mean_S_i=0.0847, bold=0.8009

100/200: mean_S_e=0.2517, mean_S_i=0.0873, bold=0.7816

110/200: mean_S_e=0.2533, mean_S_i=0.0887, bold=0.8221

120/200: mean_S_e=0.2578, mean_S_i=0.0897, bold=0.7762

130/200: mean_S_e=0.2398, mean_S_i=0.0853, bold=0.8168

140/200: mean_S_e=0.2359, mean_S_i=0.0834, bold=0.7894

150/200: mean_S_e=0.2499, mean_S_i=0.0873, bold=0.8309

160/200: mean_S_e=0.2489, mean_S_i=0.0865, bold=0.8252

170/200: mean_S_e=0.2440, mean_S_i=0.0855, bold=0.8212

180/200: mean_S_e=0.2443, mean_S_i=0.0855, bold=0.8026

190/200: mean_S_e=0.2480, mean_S_i=0.0850, bold=0.7940

200/200: mean_S_e=0.2405, mean_S_i=0.0842, bold=0.7931

fic complete!

\n>>> Running algorithm: fic_eib (seed=0)

(using state from dependency: fic)

Using passed bold buffer (200 samples, using last 150)

Running fic_eib algorithm for 2000 iterations...

100/2000: fc_corr=0.1350, fc_rmse=0.2801, mean_S_e=0.2570, mean_S_i=0.0887, bold=0.8016, fc_inloop=0.0175

200/2000: fc_corr=0.1110, fc_rmse=0.2853, mean_S_e=0.2417, mean_S_i=0.0841, bold=0.7971, fc_inloop=0.0123

300/2000: fc_corr=0.1482, fc_rmse=0.2735, mean_S_e=0.2508, mean_S_i=0.0867, bold=0.8278, fc_inloop=0.0249

400/2000: fc_corr=0.2089, fc_rmse=0.2739, mean_S_e=0.2661, mean_S_i=0.0897, bold=0.8806, fc_inloop=0.0192

500/2000: fc_corr=0.1820, fc_rmse=0.2794, mean_S_e=0.2695, mean_S_i=0.0917, bold=0.8573, fc_inloop=0.0138

600/2000: fc_corr=0.1432, fc_rmse=0.2810, mean_S_e=0.2541, mean_S_i=0.0865, bold=0.8787, fc_inloop=0.0154

700/2000: fc_corr=0.1672, fc_rmse=0.2732, mean_S_e=0.2617, mean_S_i=0.0883, bold=0.8556, fc_inloop=0.0242

800/2000: fc_corr=0.2213, fc_rmse=0.2685, mean_S_e=0.2689, mean_S_i=0.0903, bold=0.9115, fc_inloop=0.0272

900/2000: fc_corr=0.2699, fc_rmse=0.2511, mean_S_e=0.2724, mean_S_i=0.0899, bold=0.9255, fc_inloop=0.0455

1000/2000: fc_corr=0.2264, fc_rmse=0.2561, mean_S_e=0.2491, mean_S_i=0.0818, bold=0.8911, fc_inloop=0.0448

1100/2000: fc_corr=0.3105, fc_rmse=0.2474, mean_S_e=0.2735, mean_S_i=0.0900, bold=0.8965, fc_inloop=0.0496

1200/2000: fc_corr=0.3417, fc_rmse=0.2497, mean_S_e=0.2570, mean_S_i=0.0827, bold=0.8454, fc_inloop=0.0434

1300/2000: fc_corr=0.3620, fc_rmse=0.2392, mean_S_e=0.2541, mean_S_i=0.0811, bold=0.8861, fc_inloop=0.0558

1400/2000: fc_corr=0.4133, fc_rmse=0.2274, mean_S_e=0.2677, mean_S_i=0.0845, bold=0.9010, fc_inloop=0.0677

1500/2000: fc_corr=0.3971, fc_rmse=0.2367, mean_S_e=0.2600, mean_S_i=0.0822, bold=0.8692, fc_inloop=0.0569

1600/2000: fc_corr=0.4001, fc_rmse=0.2384, mean_S_e=0.2630, mean_S_i=0.0813, bold=0.8473, fc_inloop=0.0538

1700/2000: fc_corr=0.4871, fc_rmse=0.2215, mean_S_e=0.2775, mean_S_i=0.0847, bold=0.9188, fc_inloop=0.0689

1800/2000: fc_corr=0.4406, fc_rmse=0.2342, mean_S_e=0.2448, mean_S_i=0.0761, bold=0.8658, fc_inloop=0.0588

1900/2000: fc_corr=0.5188, fc_rmse=0.2043, mean_S_e=0.2619, mean_S_i=0.0798, bold=0.9533, fc_inloop=0.0888

2000/2000: fc_corr=0.5472, fc_rmse=0.1773, mean_S_e=0.2525, mean_S_i=0.0761, bold=0.8173, fc_inloop=0.1284

fic_eib complete!

============================================================

Algorithms complete. Results: ['fic', 'fic_eib']

============================================================

============================================================

STEP 4: Running optimization...

============================================================

Preparing optimization model (t1=300000.0ms, dt=4.0ms, solver=Heun)

Step 0: 0.322668

Step 1: 0.287123

Step 2: 0.273792

Step 3: 0.278946

Step 4: 0.281408

Step 5: 0.281410

Step 6: 0.280226

Step 7: 0.277955

Step 8: 0.275005

Step 9: 0.272384

Step 10: 0.269918

Step 11: 0.267459

Step 12: 0.264796

Step 13: 0.261953

Step 14: 0.259053

Step 15: 0.255887

Step 16: 0.252129

Step 17: 0.249260

Step 18: 0.246927

Step 19: 0.243956

Step 20: 0.239331

Step 21: 0.235034

Step 22: 0.233686

Step 23: 0.230091

Step 24: 0.222207

Step 25: 0.218732

Step 26: 0.219809

Step 27: 0.217955

Step 28: 0.211716

Step 29: 0.204805

Step 30: 0.204738

Step 31: 0.203428

Step 32: 0.195780

Step 33: 0.186489

Step 34: 0.184497

Step 35: 0.183675

Step 36: 0.175692

Step 37: 0.165803

Step 38: 0.165003

Step 39: 0.160964

Step 40: 0.151198

Step 41: 0.147560

Step 42: 0.156845

Step 43: 0.146453

Step 44: 0.137254

Step 45: 0.138594

Step 46: 0.140232

Step 47: 0.130705

Step 48: 0.124505

Step 49: 0.123443

Step 50: 0.121160

Step 51: 0.116373

Step 52: 0.117663

Step 53: 0.117160

Step 54: 0.114675

Step 55: 0.110528

Step 56: 0.112404

Step 57: 0.108646

Step 58: 0.107686

Step 59: 0.107167

Step 60: 0.113084

Step 61: 0.118312

Step 62: 0.104568

Step 63: 0.108190

Step 64: 0.109938

Step 65: 0.112890

Optimization complete.

============================================================

Experiment complete.

============================================================Results

import bsplot

cmap = plt.cm.cividis

accent_blue = cmap(0.3)

accent_gold = cmap(0.85)

accent_mid = cmap(0.6)

# Get FC matrices from result structure (observations attached to simulation results)

fc_target = np.array(results.integration.observations.fc_target)

fc_post_fic = np.array(results.fic.post_tuning.observations.fc)

fc_post_fic_eib = np.array(results.fic_eib.post_tuning.observations.fc)

fc_gradient = np.array(results.gradient_eib.simulation.observations.fc)

# FC correlations from post-tuning observations

corr_post_fic = float(results.fic.post_tuning.observations.fc_corr)

corr_fic_eib = float(results.fic_eib.post_tuning.observations.fc_corr)

corr_gradient = float(results.gradient_eib.simulation.observations.fc_corr)

# 3 rows x 4 columns layout

mosaic = "AABBCCDD\nEEFFGGHH\nIIJJKKLL"

fig, axes = plt.subplot_mosaic(mosaic, figsize=(16, 12), layout="compressed")

# =============================================================================

# ROW 1: FIC Algorithm Results (Local E-I Balance Tuning)

# =============================================================================

# Number of time steps to show (720ms / 4ms dt = 180, matching original)

n_display = 180

# A: S_e Before FIC

ax = axes["A"]

data = np.array(results.fic.pre_tuning.data[:n_display, 0, :])

for i in range(data.shape[1]):

ax.plot(data[:, i], color=cmap(i / data.shape[1]), lw=0.5, alpha=0.6)

ax.axhline(0.25, color=accent_gold, ls="--", lw=2, label="Target")

ax.set(xlabel="Time step", ylabel="S_e", title="FIC: Before Tuning")

ax.set_ylim(0, 1)

ax.legend(loc="upper right")

# B: S_e After FIC

ax = axes["B"]

data = np.array(results.fic.post_tuning.data[:n_display, 0, :])

for i in range(data.shape[1]):

ax.plot(data[:, i], color=cmap(i / data.shape[1]), lw=0.5, alpha=0.6)

ax.axhline(0.25, color=accent_gold, ls="--", lw=2, label="Target")

ax.set(xlabel="Time step", ylabel="S_e", title="FIC: After Tuning")

ax.set_ylim(0, 1)

ax.legend(loc="upper right")

# C: FIC Convergence (Mean S_e over iterations)

ax = axes["C"]

ax.plot(

np.array(results.fic.history.mean_S_e), lw=2, color=accent_blue, label="Mean S_e"

)

ax.axhline(0.25, color=accent_gold, ls="--", lw=2, label="Target")

ax.set(xlabel="FIC iteration", ylabel="Mean S_e", title="FIC: Convergence")

ax.legend(loc="upper right")

ax.grid(True, alpha=0.3)

# D: FC Target (empirical)

ax = axes["D"]

np.fill_diagonal(fc_target, np.nan)

im = ax.imshow(fc_target, cmap="cividis", vmin=0, vmax=1)

ax.set(title="Target FC (Empirical)")

plt.colorbar(im, ax=ax, fraction=0.046)

# =============================================================================

# ROW 2: FIC+EIB Combined Algorithm Results

# =============================================================================

# E: FC after FIC+EIB

ax = axes["E"]

np.fill_diagonal(fc_post_fic_eib, np.nan)

im = ax.imshow(fc_post_fic_eib, cmap="cividis", vmin=0, vmax=1)

ax.set(title=f"FIC+EIB FC (r={corr_fic_eib:.3f})")

plt.colorbar(im, ax=ax, fraction=0.046)

# F: FIC+EIB Convergence (FC correlation)

ax = axes["F"]

ax2 = ax.twinx()

(l1,) = ax.plot(

np.array(results.fic_eib.history.fc_corr), lw=2, color=accent_blue, label="FC Corr"

)

(l2,) = ax2.plot(

np.array(results.fic_eib.history.fc_rmse), lw=2, color=accent_gold, label="FC RMSE"

)

ax.set(

xlabel="FIC+EIB iteration", ylabel="FC Correlation", title="FIC+EIB: Convergence"

)

ax2.set_ylabel("FC RMSE")

ax.legend(handles=[l1, l2], loc="center right")

ax.grid(True, alpha=0.3)

# G: FIC+EIB wLRE

ax = axes["G"]

wLRE = np.array(results.fic_eib.state.coupling.EIBLinearCoupling.wLRE)

im = ax.imshow(wLRE, cmap="cividis")

ax.set(title="FIC+EIB: wLRE")

plt.colorbar(im, ax=ax, fraction=0.046)

# H: FIC+EIB wFFI

ax = axes["H"]

wFFI = np.array(results.fic_eib.state.coupling.EIBLinearCoupling.wFFI)

im = ax.imshow(wFFI, cmap="cividis")

ax.set(title="FIC+EIB: wFFI")

plt.colorbar(im, ax=ax, fraction=0.046)

# =============================================================================

# ROW 3: Gradient-Based Optimization Results

# =============================================================================

# I: FC after Gradient Optimization

ax = axes["I"]

np.fill_diagonal(fc_gradient, np.nan)

im = ax.imshow(fc_gradient, cmap="cividis", vmin=0, vmax=1)

ax.set(title=f"Gradient FC (r={corr_gradient:.3f})")

plt.colorbar(im, ax=ax, fraction=0.046)

# J: Gradient Loss Convergence

ax = axes["J"]

# Access history directly - tvboptim optimizer stores loss in history['loss'].save

loss_values = np.array(results.gradient_eib.history["loss"].save.tolist())

ax.plot(loss_values, lw=2, color="k", alpha=0.9)

ax.scatter(0, loss_values[0], s=80, color=accent_blue, zorder=5)

ax.scatter(len(loss_values) - 1, loss_values[-1], s=80, color=accent_gold, zorder=5)

ax.set(

xlabel="Optimization Step",

ylabel="Combined Loss",

title="Gradient: Loss Convergence",

)

ax.grid(True, alpha=0.3)

# K: wLRE after Gradient

ax = axes["K"]

im = ax.imshow(

results.gradient_eib.state.coupling.EIBLinearCoupling.wLRE, cmap="cividis"

)

ax.set(title="Gradient: wLRE")

plt.colorbar(im, ax=ax, fraction=0.046)

# L: wFFI after Gradient

ax = axes["L"]

im = ax.imshow(

results.gradient_eib.state.coupling.EIBLinearCoupling.wFFI, cmap="cividis"

)

ax.set(title="Gradient: wFFI")

plt.colorbar(im, ax=ax, fraction=0.046)

bsplot.style.format_fig(fig)

plt.show()